Microglia in Mice and Men

A hot debate has broken out among scientists who study microglia, the glorious cells best known as the soldiers of the brain’s immune system. OK, “debate” may be a strong word; it’s really just a series of gentlemanly letters published over the last couple of months in a neuroscience journal you’ve probably never heard of. Still, I think the debate is worth highlighting not only because I’m an unabashed fan of microglia, but because it raises one of the most pressing issues facing medical research: the differences between mice and people.

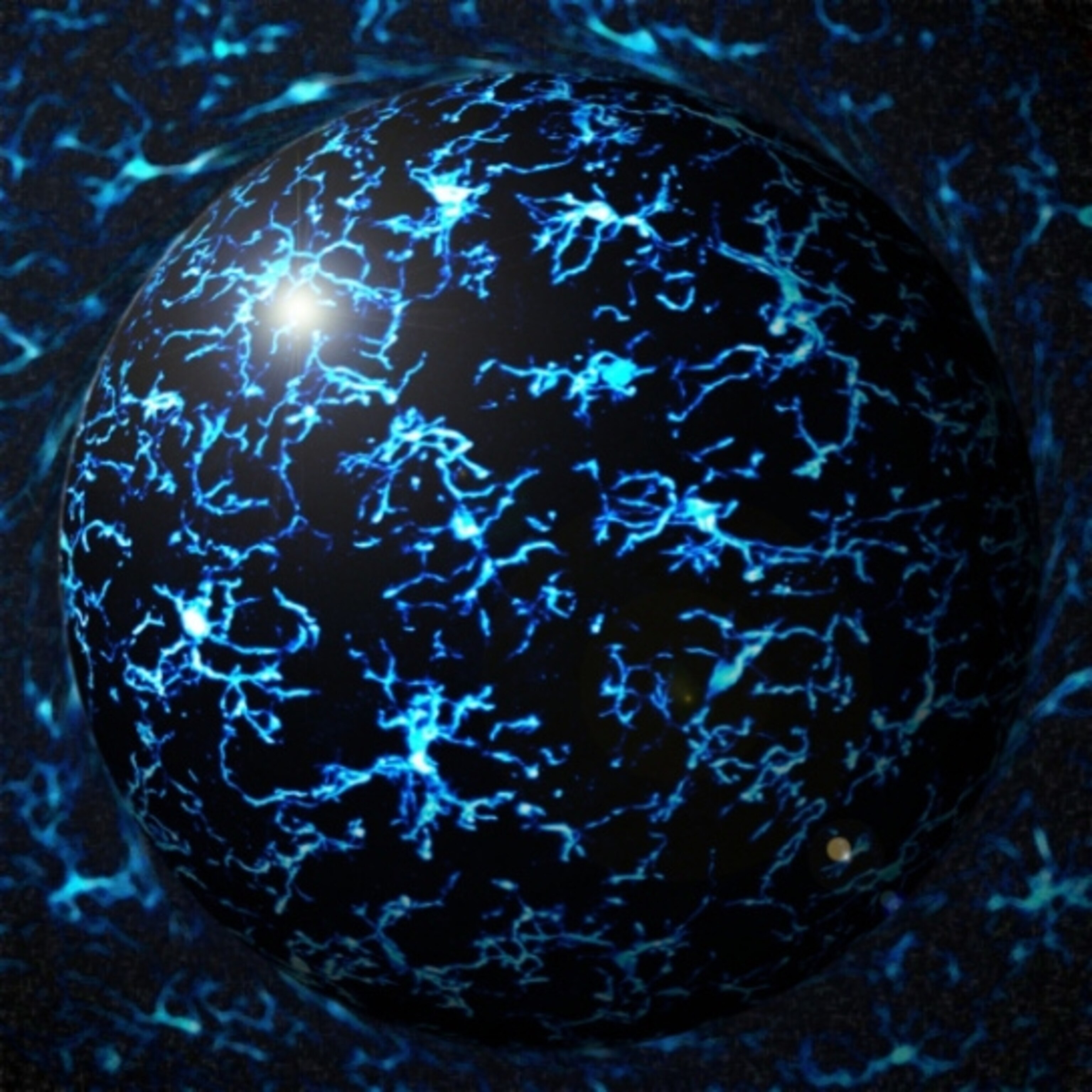

Microglia straddle the fields of immunology and neuroscience. The cells begin in the yolk sac as part of the immune system, in the same cellular lineage as the macrophages of the blood. But over the first week of life, microglia migrate to the brain and make it their permanent home. They become vigilant soldiers, constantly patrolling for any sort of cellular invader, whether a bacterial infection or a pile of protein trash. Once they spot the offender, they snap into action, morphing from their spindly resting state into a fat blob so they can literally eat the problem.

Microglia’s role in the immune system has been known for more than a century. But the cells’ popularity skyrocketed in the last decade or so, after they were linked to neurodegenerative disease, developmental disorders, and plasticity in the healthy brain. The vast majority of these microglia studies rely on laboratory mice. And that’s not good, according to neuroscientists Amy Smith and Mike Dragunow.

As they point out in the March issue of Trends in Neurosciences, mice and people are separated by 65 million years of evolution, leading to many genetic differences. What’s more, because of pressure from evolving pathogens, “the immune system is a ‘hot-spot’ for evolutionary changes,” the researchers write.

They describe many molecular differences between mouse and human microglia. To name just a few: The interferon-gamma receptor, which binds to an important immune molecule, is expressed by microglia in the mouse brain, but not in the human brain. Another immune player, the toll-like receptor 4, is expressed at high levels in mouse microglia but at low levels in human microglia. When exposed to valproic acid (an anti-seizure drug), mouse microglia die but human microglia don’t. In mouse models of Alzheimer’s disease, microglia seem virile, engulfing healthy neurons and contributing to the brain’s degeneration. But in postmortem brain tissue from people with the disease, the microglia are limp and atrophied, which might suggest that they initially play a protective role.

With differences like those (and more), no one should assume that something discovered in rodents will be applicable to people. I suspect most scientists would agree with that statement. But Smith and Dragunow go a provocative step further, arguing that before publication, rodent studies should be verified in human brain cells.

Those sorts of comparisons certainly wouldn’t be easy, and may even be useless, due to several challenges surrounding the study of human microglia. Or so counter neuroscientists Linda Watkins and Mark Hutchinson in the April issue of the same journal.

For most researchers, the only way to closely examine human microglia is by taking the cells from a dead brain. Watkins and Hutchinson argue that the procedures for harvesting and storing postmortem tissue may well have an effect on microglia (which, after all, are programmed to notice and react to changes in the environment). Most commercially available tissue comes from first-trimester abortions, which damage the fetus’s skull in order to remove it. Then the brain tissue has to be “collected, packaged, and shipped to a processing center, typically arriving 1–2 days later,” Watkins and Hutchinson write. Everything the tissue is exposed to in the interim — changes in temperature, exposure to chemicals, sudden movements, rough handling — may change the microglia. One fetal brain results in anywhere from 3 to 12 vials of microglia, according to the scientists. That variability alone shows how much the brain can be damaged during its preservation.

Even if fetal brain tissue sustained no damage during processing, there’s still the issue of age. Microglia undergo many important molecular changes during late fetal development and even after birth, so first-trimester cells could look and behave very differently than those at a later stage, regardless of species.

These issues could be dealt with, to some extent. Scientists could be ever-so-careful when processing human brain tissue, and be sure to compare cells at the same stage of development. And, as Smith and Dragunow point out in their counter to the counter-argument, fetal brains aren’t the only option. Some brain banks store tissue from adults who suffered from various diseases during their lifetime. “By directly studying microglia in the diseased adult human brain,” they say, “the disease context can be studied and the information garnered used to help validate animal models of these diseases.”

But a much trickier problem plagues every study, no matter the species, attempting to study a disease state in the laboratory: variability. For the human fetal tissue, each sample of microglia is pricey — around $500 — and so researchers tend to limit their studies to cells from a single fetus. Yet one fetus could be very different, both genetically and environmentally, from the next. For example, usually nothing is known about the fetus’s mother, such as her medical history or diet, which could have a big influence on the functioning of her baby’s brain. The same argument applies for the adult brains, and maybe more so: An elderly person’s brain is the result of some combination of exposures and experiences acquired over decades.

The mouse-versus-human question goes way, way beyond microglia. More than 80 percent of drugs that seem promising in mouse models end up failing in clinical trials, as Steve Perrin pointed out in a Nature commentary last month. Perrin’s organization, the ALS Therapy Development Institute, has tested more than 100 compounds in a mouse model of ALS (a motor neuron disease). None of them worked, even those that other research groups had shown to slow disease. The same trend curses the cancer field, in which only 5 percent of drugs that seem to work in animal studies end up making it to commercial licensing.

I suppose this post hasn’t painted a very rosy picture of the state of basic medical research. I don’t think all is hopeless, though. Perrin suggests that researchers look more carefully at symptoms that crop up in animal models of disease. If they don’t closely mimic those seen in people, then they’re not likely to be a good model for testing treatments. He also argues that spurious findings can be avoided by more rigorous statistical analyses and a stronger focus on individual variability.

Advancements are happening on the human side, too. Thanks to amazing new technologies in the stem-cell field, researchers can now take bits of a patient’s skin and reprogram it into pretty much any kind of cell in the body, including neurons and microglia. Researchers (or even robots) could then test a battery of drugs on the cells to see if any lead to noticeable improvements.

Still, there’s a sobering lesson in here for all of us who write about cures that happen in laboratory studies, and for everyone who reads the headlines about them. Lots of treatments will cure diseases in mice or Petri dishes, but the vast majority won’t pan out in people.

*

Related stories:

Best Cells Ever (Only Human)

Supporting the Support Cells in Lou Gehrig’s Disease (Only Human)

The Constant Gardeners (Nature)

Brain Imaging Study Points to Microglia as Autism Biomarker (SFARI.org)
